Cuibit Web Engineering

Web architecture and technical SEO team

The Cuibit team covering web architecture, Next.js delivery, technical SEO and buyer-facing product surfaces.

AI Coding Agents in Enterprise: A Governance Playbook for SaaS Teams in 2026

Key takeaways

- AI coding agents are moving from developer side tools to enterprise software infrastructure. The important question is no longer whether teams should try them, but how to govern them without slowing engineering teams down.

- Recent industry signals point in the same direction: large companies are broadening access to coding agents, enterprise platforms are emphasizing agent governance, and software tools are being redesigned so agents can work through APIs, repositories, and structured context instead of only human-facing interfaces.

- SaaS, ecommerce, and platform teams should treat AI coding agents as controlled contributors. They need permissions, scopes, test requirements, review rules, logging, cost limits, data policies, and rollback procedures.

- The safest rollout starts with low-risk engineering work such as test generation, documentation updates, refactoring suggestions, migration drafts, internal tool improvements, and bug reproduction. High-risk production changes should stay under tighter human review.

- AI agents can improve delivery speed, but only when the engineering system is ready. Weak requirements, poor test coverage, unclear ownership, messy repositories, and missing CI gates will make agents amplify existing problems.

- The winning operating model is not "replace developers." It is "raise developer judgment." Engineers move toward architecture, review, decomposition, evaluation, and release accountability while agents handle more tactical implementation work.

Why this topic matters now

AI coding agents have crossed an important threshold. They are no longer only autocomplete tools that help one developer inside an editor. They are becoming persistent software workers that can inspect a codebase, plan changes, generate code, open pull requests, react to review feedback, run tests, and support teams across product, engineering, QA, and operations.

That shift matters for business leaders because software delivery is no longer only a staffing question. It is becoming an operating-system question: how does the company define work, expose context, approve changes, measure quality, and protect production systems when AI agents participate in the development lifecycle?

For SaaS companies, ecommerce platforms, marketplaces, dashboards, and internal portals, the opportunity is real. Faster issue triage, automated test coverage, safer migrations, cleaner documentation, and more consistent code review can improve product velocity. But the risk is also real. Unreviewed code, hidden security issues, uncontrolled model costs, accidental exposure of sensitive data, and poor architectural decisions can create expensive downstream damage.

That is why AI coding agent governance should be treated as a practical engineering discipline, not a policy document that lives outside the team. Companies investing in web development services, SaaS product builds, ecommerce engineering, or AI automation need an implementation model that makes agents useful inside real delivery workflows.

What changed: from assistant to accountable workflow participant

The first wave of AI coding was mostly individual. A developer asked for a function, a regex, a test, or an explanation. The output was local, temporary, and easy to ignore.

The current wave is different. Coding agents can operate across repositories and workflows. They can be assigned issues, generate branches, produce pull requests, and use tools. Some enterprise teams are standardizing access because unmanaged tool adoption creates fragmented workflows and security concerns. At the same time, agent governance platforms are becoming a boardroom topic because companies need to know which agents are acting, what they are allowed to do, and how outcomes are measured.

This changes the adoption question. It is no longer enough to ask, "Which coding agent is best?" A better question is: "Which engineering tasks can we safely delegate, which controls need to exist around the work, and how do we know quality improved?"

That framing is especially important for teams maintaining revenue-critical systems. A SaaS dashboard, checkout flow, subscription engine, admin portal, API service, or marketplace backend cannot be treated like a toy repository. The agent must work inside a system of tests, reviews, monitoring, and accountability.

The business case: where AI coding agents create value

AI coding agents create the most value when they reduce engineering drag without lowering standards. In practice, that means targeting tasks that are repetitive, well-scoped, and easy to validate.

Useful early targets include:

- writing unit tests for existing logic

- creating regression tests from bug reports

- updating documentation after code changes

- drafting migration scripts for review

- refactoring small modules

- generating API client examples

- improving internal admin tools

- reproducing bugs from logs or tickets

- identifying unused code

- preparing pull request summaries

- checking consistency across similar components

These tasks matter because they often slow teams down but do not always require deep product judgment. An agent can create a first draft, while a human engineer verifies correctness, architecture, security, and business fit.

The value is not only speed. Better test coverage, faster onboarding, clearer documentation, and more consistent review notes improve the whole engineering system. For a company building a custom SaaS platform or enterprise dashboard, that can reduce delivery risk as much as delivery time.

Cuibit's work in custom web development often involves systems where front-end, backend, integrations, admin tools, and data models must evolve together. In that environment, coding agents are useful only when they understand the boundaries of the system and operate under review.

Where AI coding agents can create risk

Coding agents are not dangerous because they write code. They are risky because they can write plausible code quickly. Plausible code can pass a quick visual review while still introducing security, performance, maintainability, or product problems.

The most common risks include:

- insecure data handling

- weak authentication or authorization logic

- broken edge cases

- poor performance in production-sized datasets

- inconsistent architecture patterns

- hidden dependency changes

- brittle tests that only prove the generated code works

- changes that satisfy the issue text but miss the business intent

- untracked cost from long-running agent tasks

- accidental exposure of repository, customer, or credential data

The solution is not to ban agents. The solution is to narrow their scope, improve validation, and make the review process stronger.

A good governance model should answer these questions before agents touch important code:

- Which repositories can agents access?

- Which branches can they modify?

- Can they create pull requests automatically?

- Which files or directories are off limits?

- What data can be included in prompts?

- Which tests must pass before review?

- Who approves production changes?

- How are outputs logged?

- How are security-sensitive changes flagged?

- How are costs monitored?

- How are mistakes rolled back?

If these answers are unclear, the company is not ready for broad agent adoption.
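One way to make these questions concrete is to record the answers as data that tooling can check. The sketch below is a minimal illustration, not a standard schema: `AgentPolicy`, its field names, and `unanswered_questions` are hypothetical names chosen for this example, with one field per question in the list above.

```python
from __future__ import annotations

from dataclasses import dataclass, field, fields


@dataclass
class AgentPolicy:
    """Hypothetical record of a team's answers to the governance questions."""

    allowed_repositories: list[str] = field(default_factory=list)
    writable_branch_patterns: list[str] = field(default_factory=list)
    may_open_pull_requests: bool | None = None  # None means "not decided yet"
    off_limits_paths: list[str] = field(default_factory=list)
    prompt_data_rules: str = ""
    required_checks: list[str] = field(default_factory=list)
    production_approvers: list[str] = field(default_factory=list)
    log_destination: str = ""
    security_flag_rule: str = ""
    monthly_cost_limit_usd: float | None = None
    rollback_procedure: str = ""


def unanswered_questions(policy: AgentPolicy) -> list[str]:
    """Return the names of fields that are still empty or undecided."""
    missing = []
    for f in fields(policy):
        value = getattr(policy, f.name)
        # None, empty strings and empty lists all count as "no answer yet".
        # An explicit False (for example "agents may not open PRs") is an answer.
        if value is None or value == "" or value == []:
            missing.append(f.name)
    return missing
```

A team could treat a non-empty result from `unanswered_questions` as a signal that broad rollout should wait, which mirrors the readiness test described above.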

The AI coding agent governance model

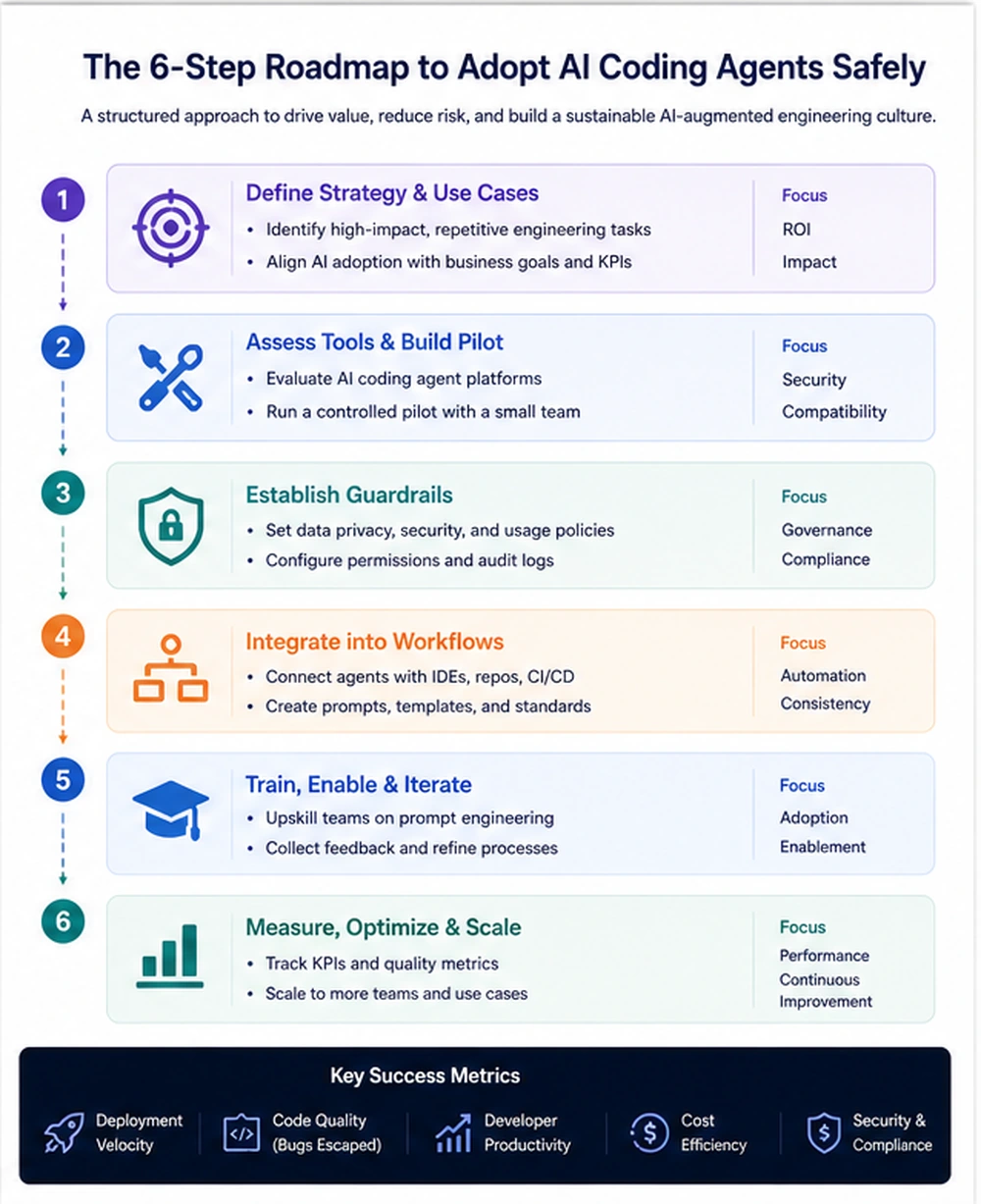

A practical governance model has six layers: strategy, access, workflow, validation, measurement, and continuous improvement.

1. Strategy: define where agents should help

Start with business outcomes, not tool excitement. The goal might be reducing bug backlog, improving test coverage, speeding up frontend changes, accelerating documentation, or helping a small team maintain a larger codebase.

For each goal, define acceptable agent tasks. For example, an ecommerce team might allow agents to draft tests for checkout edge cases but not change payment authorization logic without senior review. A SaaS team might allow agents to refactor UI components but require additional review for permission models or billing code.

Strategy also needs an adoption owner. Without ownership, every team invents its own rules, and the company ends up with inconsistent standards.

2. Access: restrict what agents can see and change

Agents should not receive unlimited access by default. Use least privilege. Start with limited repositories, limited branches, and limited task types. Keep secrets, customer data, and production credentials out of prompts and tool contexts.

For teams using AI agents across multiple services, access design matters. A backend service may include payment logic, customer data, API keys, or security-sensitive workflows. A front-end component library may be lower risk. Treat them differently.

Teams investing in backend development should be especially careful here. Backend systems hold the business logic, permissions, integrations, and data flows that can create serious risk if changed casually.
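As a rough sketch of least privilege at the file level, the check below lets an agent modify a path only when it matches an allow rule and no deny rule. The patterns, directory names, and function names are assumptions for illustration. Real agent platforms and repository hosts expose their own permission mechanisms, which should be preferred where available.

```python
from fnmatch import fnmatchcase

# Hypothetical path rules for one repository. Deny rules win over allow rules.
ALLOWED_PATTERNS = ["docs/*", "packages/ui/*", "tests/*"]
DENIED_PATTERNS = ["*.env", "*/secrets/*", "services/billing/*", "infra/*"]


def agent_may_modify(path: str) -> bool:
    """Least privilege: a path must match an allow rule and no deny rule."""
    if any(fnmatchcase(path, pattern) for pattern in DENIED_PATTERNS):
        return False
    return any(fnmatchcase(path, pattern) for pattern in ALLOWED_PATTERNS)


def blocked_paths(changed_paths: list[str]) -> list[str]:
    """Return the changed paths the agent was not permitted to touch."""
    return [p for p in changed_paths if not agent_may_modify(p)]
```

Because the default is "deny unless listed", a new backend service stays off limits until someone deliberately adds it to the allow list.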

3. Workflow: connect agents to the existing delivery system

Agents should not operate in a parallel universe. They should work through the same issue tracker, repository, pull request, CI, and release process as human contributors.

A strong workflow might look like this:

- A human writes or approves a clear issue.

- The agent receives a scoped task with constraints.

- The agent creates a branch and draft pull request.

- Automated tests, linting, and security checks run.

- The agent summarizes what changed.

- A human engineer reviews the change.

- Additional review is required for sensitive areas.

- The final merge follows the normal release process.

This workflow preserves accountability. It also makes agent output easier to inspect because every change is attached to an issue, branch, test result, and reviewer.
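The workflow above can be expressed as a simple merge gate. This is a minimal sketch under stated assumptions: `AgentPullRequest` and its fields are hypothetical, and the rule that sensitive areas need two approvals instead of one is an example threshold, not a recommendation from a specific platform.

```python
from dataclasses import dataclass


@dataclass
class AgentPullRequest:
    """Hypothetical snapshot of an agent-authored pull request."""

    linked_issue_approved: bool
    checks_passed: bool  # tests, linting and security checks
    has_summary: bool
    human_approvals: int
    touches_sensitive_area: bool


def merge_blockers(pr: AgentPullRequest) -> list[str]:
    """List every workflow step that is still unmet. Empty means ready."""
    blockers = []
    if not pr.linked_issue_approved:
        blockers.append("no human-approved issue")
    if not pr.checks_passed:
        blockers.append("automated checks have not passed")
    if not pr.has_summary:
        blockers.append("missing change summary")
    # Sensitive areas need one extra reviewer in this sketch (an assumed rule).
    required = 2 if pr.touches_sensitive_area else 1
    if pr.human_approvals < required:
        blockers.append(f"needs {required} human approval(s), has {pr.human_approvals}")
    return blockers
```

Returning the full list of blockers, instead of a single yes or no, gives the reviewer and the agent the same explanation of why a change is not ready.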

4. Validation: require tests and human review

Validation is the difference between useful automation and risky automation. Agents should be required to run tests where possible. If tests do not exist, agents should usually write them before changing the implementation.

However, passing tests is not enough. A weak test suite can approve bad code. Human review remains necessary, especially for architecture, security, performance, and product judgment.

For frontend and product-interface work, teams using React development or Next.js development should add visual checks, accessibility checks, route checks, metadata checks, and performance review to the process. An agent can generate a component, but a human must confirm that the interaction makes sense for real users.

5. Measurement: track quality, not only speed

Many teams measure AI agents by how much code they generate. That is the wrong metric. Code volume can increase while quality decreases.

Better metrics include:

- lead time from issue to reviewed pull request

- percentage of agent PRs accepted after review

- number of review comments per agent PR

- test coverage added

- escaped bugs

- rollback rate

- security findings

- build failures

- cost per useful task

- developer satisfaction

- onboarding time

- maintenance burden after merge

Measure both speed and quality. If agents make teams faster but create more bugs, the operating model needs adjustment.
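A few of these metrics can be computed from basic pull request records. The sketch below assumes a hypothetical `AgentPrRecord` exported from review and billing tools, and it defines a "useful task" as a merged pull request that was not rolled back. Both are assumptions for illustration, and teams may reasonably define usefulness differently.

```python
from dataclasses import dataclass


@dataclass
class AgentPrRecord:
    """Hypothetical per-PR record exported from review and billing tools."""

    merged: bool
    rolled_back: bool
    review_comments: int
    cost_usd: float


def summarize(records: list[AgentPrRecord]) -> dict[str, float]:
    """Compute acceptance rate, rollback rate, comments per PR and cost per useful task."""
    if not records:
        raise ValueError("no agent pull requests to summarize")
    merged = [r for r in records if r.merged]
    # "Useful" here means merged and not rolled back afterwards (an assumption).
    useful = [r for r in merged if not r.rolled_back]
    total_cost = sum(r.cost_usd for r in records)
    return {
        "acceptance_rate": len(merged) / len(records),
        "rollback_rate": (len(merged) - len(useful)) / len(merged) if merged else 0.0,
        "comments_per_pr": sum(r.review_comments for r in records) / len(records),
        # Rejected and reverted work still costs money, so it stays in the numerator.
        "cost_per_useful_task": total_cost / len(useful) if useful else float("inf"),
    }
```

Dividing total spend by useful tasks only, instead of by all tasks, is a deliberate choice here: it makes rejected and reverted pull requests visibly raise the cost figure.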

6. Continuous improvement: create an agent playbook

The best teams will maintain internal playbooks. These should include prompt templates, approved task types, review checklists, repository rules, examples of good agent output, examples of rejected output, security guidance, and escalation paths.

The playbook should evolve as teams learn. Agent adoption is not a one-time rollout. It is a new delivery capability that needs tuning.

A safe rollout plan for SaaS and ecommerce teams

A careful rollout does not need to be slow. It needs to be staged.

Phase 1: Prepare the engineering foundation

Before adding agents, inspect the current system. Are issues written clearly? Is the repository understandable? Do tests run reliably? Are secrets protected? Are code owners configured? Are pull request templates useful? Are deployment pipelines stable?

AI agents do not fix a messy engineering process by themselves. They often expose the mess faster.

If the product has fragile architecture or recurring production incidents, start by improving the foundation. Cuibit's backend reliability rebuild work is the kind of engineering context where governance matters because reliability, observability, and clean architecture become prerequisites for safe automation.

Phase 2: Start with low-risk tasks

Choose tasks that are useful, narrow, and easy to evaluate. Examples include:

- generating unit tests for pure functions

- updating README files

- producing API examples

- documenting component props

- drafting migration notes

- improving lint issues

- creating small internal scripts

- writing bug reproduction steps

Avoid starting with payment flows, authentication, permissions, data deletion, financial calculations, or production infrastructure. Those areas can be agent-assisted later, but not before the governance model is proven.

Phase 3: Require pull requests and review

Every agent change should flow through pull requests. Agents should not push directly to protected branches. The pull request should include a summary, changed files, test evidence, and known limitations.
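The four required pull request sections can be checked automatically before a human spends time on review. The sketch below assumes a pull request template that uses level-2 Markdown headings with these exact titles. The heading layout and the `missing_sections` helper are assumptions for this example.

```python
import re

# The four sections named above. The "## Heading" layout is an assumed template.
REQUIRED_SECTIONS = ["Summary", "Changed files", "Test evidence", "Known limitations"]


def missing_sections(pr_body: str) -> list[str]:
    """Return required sections that are absent or have no content."""
    # Split the body on level-2 Markdown headings, capturing each heading text.
    parts = re.split(r"^##[ \t]+(.+?)[ \t]*$", pr_body, flags=re.MULTILINE)
    # re.split with one capture group yields [preamble, h1, body1, h2, body2, ...].
    found = {
        heading.strip().lower(): text.strip()
        for heading, text in zip(parts[1::2], parts[2::2])
    }
    return [s for s in REQUIRED_SECTIONS if not found.get(s.lower())]
```

A check like this only proves the sections exist and are not empty. Whether the test evidence is convincing is still a reviewer's call.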

Human reviewers should be trained to review agent work differently. Do not assume a generated change is correct because it looks polished. Review the requirement, the edge cases, the architecture, and the tests.

Phase 4: Expand by task class, not by enthusiasm

Once the first task class works, expand to another. For example, move from documentation updates to test generation, then small refactors, then UI improvements, then controlled bug fixes.

This is safer than giving broad access to every team on day one. It also helps the organization learn which tasks agents perform well and which tasks still require more human input.
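Expansion by task class can be modeled as a ladder where each rung unlocks only after the previous one has a track record. In the sketch below, the order follows the example above, while the thresholds (20 reviewed pull requests and an 80 percent acceptance rate) and all names are illustrative assumptions that each team would tune.

```python
# Rollout order taken from the example above; the thresholds are assumptions.
TASK_LADDER = ["documentation", "test_generation", "small_refactor", "ui_improvement", "bug_fix"]
MIN_REVIEWED_PRS = 20
MIN_ACCEPTANCE_RATE = 0.8


def approved_task_classes(stats: dict[str, tuple[int, int]]) -> list[str]:
    """Return the task classes agents may work on.

    stats maps a task class to (reviewed_prs, accepted_prs).
    """
    approved = []
    for task_class in TASK_LADDER:
        approved.append(task_class)  # the current rung is always open
        reviewed, accepted = stats.get(task_class, (0, 0))
        proven = reviewed >= MIN_REVIEWED_PRS and accepted / reviewed >= MIN_ACCEPTANCE_RATE
        if not proven:
            break  # stop here until this class has earned the next one
    return approved
```

With no history at all, only `documentation` is open. Each later class opens once the class before it meets both thresholds.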

Phase 5: Connect to product and business metrics

Engineering velocity matters, but business value matters more. If agents help ship features faster but product quality suffers, the rollout is failing. If agents improve test coverage, reduce backlog, improve developer onboarding, and keep customer-facing quality stable, the rollout is succeeding.

For SaaS teams, connect agent adoption to release reliability, roadmap throughput, customer issue resolution, and support load. For ecommerce teams, connect it to checkout reliability, performance improvements, catalog operations, and conversion-impacting fixes.

How this changes engineering roles

AI coding agents change the shape of engineering work. They do not remove the need for strong engineers. They increase the value of engineers who can define the right problem, design the right architecture, evaluate tradeoffs, and review output carefully.

The developer role moves toward:

- problem decomposition

- system design

- agent instruction

- test strategy

- code review

- security judgment

- product reasoning

- performance evaluation

- release accountability

Junior developers still need to learn fundamentals. In fact, fundamentals become more important because reviewing generated code requires understanding what good code looks like. A junior engineer who only accepts agent output will not build judgment. A junior engineer who uses agents to compare approaches, write tests, and explain code can learn faster.

Senior engineers become force multipliers when they create patterns, templates, review standards, and architectural boundaries that agents can follow.

Practical examples by team type

SaaS product teams

A SaaS product team might use agents to generate tests for billing edge cases, draft integration examples, refactor repeated UI components, document API behavior, and create internal admin improvements. The team should keep agents from changing billing and permission logic itself until review standards are mature.

Ecommerce teams

An ecommerce team might use agents to improve product import scripts, write tests around discount logic, document checkout flows, generate QA checklists, and detect inconsistent catalog data. Changes to payment, tax, inventory, and order status logic should receive senior review.

WordPress and WooCommerce teams

A WordPress team might use agents to audit plugin usage, draft custom snippets, document theme changes, create test plans, or refactor template logic. For stores, WooCommerce development requires extra care because checkout, pricing, shipping, and role-based purchasing rules can affect revenue directly.

Internal tools teams

Internal tools are often a good starting point because the users are known and the risk is easier to control. Agents can help build admin tables, reporting views, workflow automations, and import/export utilities. Cuibit's developer tool MVP example is relevant because tooling work often benefits from faster iteration while still requiring careful product judgment.

AI automation teams

Teams building AI automation should think beyond code generation. Coding agents can help implement workflows, but the automation itself still needs process design, data boundaries, fallback behavior, and monitoring. The same governance mindset applies.

The build-versus-buy question

Most companies should not build their own general-purpose coding agent from scratch. The market is moving quickly, and the core tooling is improving. The better investment is usually in integration, governance, workflow design, and measurement.

Buy or adopt existing tools when:

- the team needs standard coding assistance

- repository access can be controlled

- existing CI and review workflows are strong

- the organization wants faster adoption

- model and tool providers already meet security needs

Build custom layers when:

- the company has complex internal workflows

- agent tasks need domain-specific context

- integrations require custom APIs

- governance and logging need to match internal systems

- prompts, templates, and evaluation need central management

- the company needs agents to interact with proprietary tools

For many SaaS and ecommerce companies, the right answer is hybrid: adopt proven agent tools, then build internal rules, templates, integrations, and reporting around them.

A buyer checklist for choosing AI coding agent tools

Before choosing a tool, ask these questions:

- Does it integrate with your repositories and pull request workflow?

- Can access be limited by repository, branch, file path, or task type?

- Does it support audit logs?

- Can it run tests and summarize results?

- How does it handle secrets and sensitive data?

- Can model usage and cost be monitored?

- Does it work with your issue tracker?

- Can it follow project-specific instructions?

- Can outputs be evaluated consistently?

- Does it support enterprise security requirements?

- Can it be disabled quickly if needed?

The best tool is not always the most impressive demo. The best tool is the one that fits your delivery system and risk profile.

Common mistakes to avoid

Mistake 1: Rolling out agents without engineering standards

If the team has weak tests, unclear requirements, and inconsistent code review, agents will not magically create quality. They will produce more output inside a weak system.

Mistake 2: Measuring only speed

Faster pull requests do not matter if they create more bugs. Track review quality, test coverage, escaped defects, rollback rate, and customer impact.

Mistake 3: Giving agents too much access too early

Broad access creates unnecessary risk. Start narrow, learn, then expand.

Mistake 4: Treating generated code as less risky than human code

Generated code should go through normal review. In some cases, it needs more review because the agent that wrote it lacks business context.

Mistake 5: Ignoring cost

Agent tasks can consume tokens, compute, and reviewer time. A task that looks automated may still be expensive if it produces low-quality pull requests.
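One simple guard against runaway spend is a per-task budget that is checked before each agent step. This is a minimal sketch: `TaskBudget`, `BudgetExceeded`, and the per-token price passed in by the caller are hypothetical, and real pricing depends on the provider and model in use.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a task would spend more than its cap."""


class TaskBudget:
    """Hypothetical per-task spending cap, checked before each agent step."""

    def __init__(self, limit_usd: float) -> None:
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, tokens: int, usd_per_1k_tokens: float) -> float:
        """Record the cost of one step, or refuse it if the cap would be exceeded."""
        cost = tokens / 1000 * usd_per_1k_tokens
        if self.spent_usd + cost > self.limit_usd:
            raise BudgetExceeded(
                f"step costs {cost:.2f} USD, only {self.limit_usd - self.spent_usd:.2f} left"
            )
        self.spent_usd += cost
        return self.spent_usd
```

This covers token spend only. Reviewer time, the other cost named above, is better tracked through review metrics such as comments per pull request and acceptance rate.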

Mistake 6: Letting every team invent its own approach

Some flexibility is useful, but the organization needs shared rules for security, review, logging, and measurement.

What a mature 2026 setup looks like

A mature AI coding agent setup is not chaotic. It is organized.

Issues are written with clear acceptance criteria. Repositories have instructions for agents. Sensitive files are protected. CI checks are reliable. Pull requests include useful summaries. Reviewers know how to evaluate generated code. Agent usage is logged. Metrics track both speed and quality. The company knows which tasks are approved, which tasks are restricted, and which tasks are not allowed.

The result is not a fully automated engineering department. It is a better engineering system where humans spend more time on judgment and less time on repetitive implementation.

For companies building complex dashboards, SaaS tools, and operational systems, this can become a real advantage. Cuibit's custom React enterprise dashboard work is a good example of the type of product where speed matters, but architecture, data handling, and user experience still need careful senior review.

Editorial conclusion

AI coding agents are becoming part of the enterprise software delivery stack. That creates a major opportunity for SaaS, ecommerce, and product teams that want to move faster without lowering quality. It also creates risk for teams that treat agents as magic developers rather than controlled contributors.

The practical path is governance-first adoption. Define the tasks. Limit the access. Connect agents to issues and pull requests. Require tests. Keep human review. Measure quality. Expand gradually. Improve the playbook as the team learns.

This is not about replacing the engineering function. It is about redesigning the engineering workflow so humans and agents each do the work they are best suited for. Agents can produce drafts, tests, summaries, and routine changes. Humans still own architecture, product judgment, security, tradeoffs, and release accountability.

The companies that succeed in 2026 will not be the ones that simply install the newest coding assistant. They will be the ones that build a disciplined operating model around it.
